Starcraft brood war ai difficulty


Batch reinforcement learning (BRL) is a subfield of dynamic programming (DP) based reinforcement learning that has grown immensely in recent years. Batch RL is mainly used where the complete learning experience, usually a set of transitions sampled from the system, is fixed and given a priori. The learning system's task is then to derive an optimal policy from the given batch of samples. Batch RL algorithms therefore aim for the utmost data efficiency by storing experience data and applying an aggregate batch of updates to the learned policy. Figure 2 classifies batch RL algorithms, from the interaction perspective, into offline and online algorithms.
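To make the offline batch setting concrete, the following is a minimal sketch of tabular Q-iteration over a fixed set of transitions. It is not one of the algorithms classified in Figure 2. The function names, the `(s, a, r, s_next, done)` tuple layout, the discount factor `gamma`, and the tiny three-state chain are all assumptions chosen for illustration.

```python
from collections import defaultdict

def batch_q_iteration(transitions, n_actions, gamma=0.9, sweeps=50):
    """Fit a tabular Q-function from a fixed batch of transitions.

    Each transition is a hypothetical (s, a, r, s_next, done) tuple.
    No new data is collected: every sweep reuses the same batch.
    """
    q = defaultdict(float)
    for _ in range(sweeps):
        targets = defaultdict(list)
        for s, a, r, s_next, done in transitions:
            # Bootstrapped target from the current Q estimate.
            best_next = 0.0 if done else max(
                q[(s_next, b)] for b in range(n_actions))
            targets[(s, a)].append(r + gamma * best_next)
        # Synchronous update: average the targets seen for each (s, a).
        q = defaultdict(float, {
            key: sum(vals) / len(vals) for key, vals in targets.items()})
    return q

def greedy_policy(q, states, n_actions):
    """Pick the highest-valued action in each state."""
    return {s: max(range(n_actions), key=lambda a: q[(s, a)]) for s in states}

# Illustrative batch: action 0 = stay, action 1 = move right.
# Moving right from state 1 reaches the goal (state 2) with reward 1.
batch = [
    (0, 0, 0.0, 0, False),
    (0, 1, 0.0, 1, False),
    (1, 0, 0.0, 1, False),
    (1, 1, 1.0, 2, True),
]
q = batch_q_iteration(batch, n_actions=2)
policy = greedy_policy(q, states=[0, 1], n_actions=2)
```

In this sketch the policy is derived entirely from the stored batch, which is the defining property of the offline case described above.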

Reinforcement learning focuses on finding a balance between exploring unknown areas and exploiting current knowledge.
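One common way to strike this balance is epsilon-greedy action selection, sketched below. The text does not say which exploration scheme is used, so this is an illustrative assumption; `epsilon_greedy`, `q_values`, and `rng` are hypothetical names.

```python
import random

def epsilon_greedy(q_values, epsilon, rng):
    """Return an action index for the given list of action-value estimates.

    With probability epsilon, explore by picking a uniformly random action;
    otherwise exploit by picking the action with the highest estimate.
    """
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])

rng = random.Random(0)              # seeded for reproducibility
estimates = [0.1, 0.5, 0.2]         # illustrative value estimates
greedy_choice = epsilon_greedy(estimates, epsilon=0.0, rng=rng)
explored = [epsilon_greedy(estimates, epsilon=1.0, rng=rng) for _ in range(20)]
```

Setting `epsilon` to 0 gives pure exploitation and setting it to 1 gives pure exploration; values in between trade one against the other.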

Reinforcement learning helps agents discover, through trial and error and delayed reward, which actions yield the most reward and which the most punishment.
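Delayed reward is usually handled by scoring an action with the discounted sum of the rewards that follow it. The sketch below assumes a discount factor `gamma`, which the text does not specify; the function name is hypothetical.

```python
def discounted_return(rewards, gamma=0.9):
    """Sum rewards, weighting the reward at step t by gamma ** t."""
    g = 0.0
    # Work backwards so each step reuses the return of the step after it.
    for r in reversed(rewards):
        g = r + gamma * g
    return g

# A reward that arrives two steps late still credits the first action,
# but at a discount of gamma ** 2.
late_reward = discounted_return([0.0, 0.0, 1.0], gamma=0.9)
```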


Many algorithms have been proposed to solve this problem, such as Least-Squares Policy Iteration (LSPI) and State-Action-Reward-State-Action (SARSA), but they mainly depend on discrete actions, whereas agents in such a setting have to learn from the consequences of their continuous actions in order to maximize the total reward over time. In this paper we therefore propose a new algorithm based on LSPI, called Least-Squares Continuous Action Policy Iteration (LSCAPI). LSCAPI was implemented and tested on three different games: one board game, the 8 Queens, and two real-time strategy (RTS) games, StarCraft Brood War and Glest. The evaluation showed LSCAPI to be superior to LSPI in time, policy learning ability, and effectiveness.

Introduction

An agent is anything that can be viewed as perceiving its environment through sensors and acting in that environment through actuators, as in Figure 1, while a rational agent is one that does the right thing. However, agents may have no prior knowledge of what the right or optimal actions are. To learn the best action selection, the agent needs to explore the state-action search space; from the rewards provided by the environment, it can then estimate the true expected reward of selecting an action in a given state. Reinforcement learning (RL) is learning what the agent can do and how to map situations to actions in order to maximize the numerical reward signal.
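For reference, the discrete-action SARSA baseline named above can be sketched in its standard tabular form. This is not LSPI or LSCAPI, whose details are not given here. The learning rate `alpha`, the discount `gamma`, and the integer state and action labels are assumptions for illustration.

```python
from collections import defaultdict

def sarsa_update(q, s, a, r, s_next, a_next, alpha=0.5, gamma=0.9, done=False):
    """Apply one tabular SARSA update to q[(s, a)] and return the new value.

    The target uses the action a_next that the agent actually takes next,
    which is what makes SARSA on-policy.
    """
    target = r if done else r + gamma * q[(s_next, a_next)]
    q[(s, a)] += alpha * (target - q[(s, a)])
    return q[(s, a)]

q_table = defaultdict(float)   # unseen state-action pairs start at 0
# The same experience observed twice moves the estimate toward the target.
sarsa_update(q_table, s=0, a=1, r=1.0, s_next=1, a_next=0)
sarsa_update(q_table, s=0, a=1, r=1.0, s_next=1, a_next=0)
```

The table is indexed by a finite set of actions, which illustrates why this family of methods is tied to discrete actions, the limitation the abstract raises.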

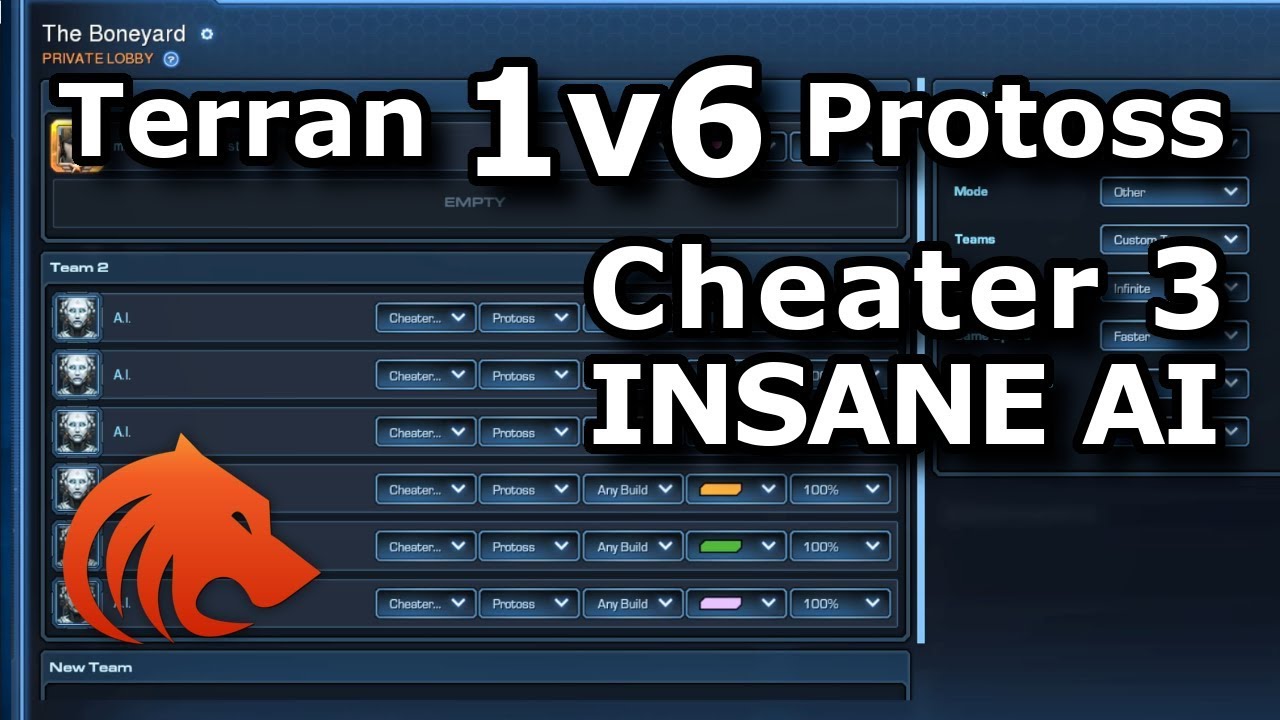

With the rapid rise of video games and the growing number of players, only challenging games with strong policies, actions, and tactics survive. How a game responds to its opponent's actions is the key issue for popular games.